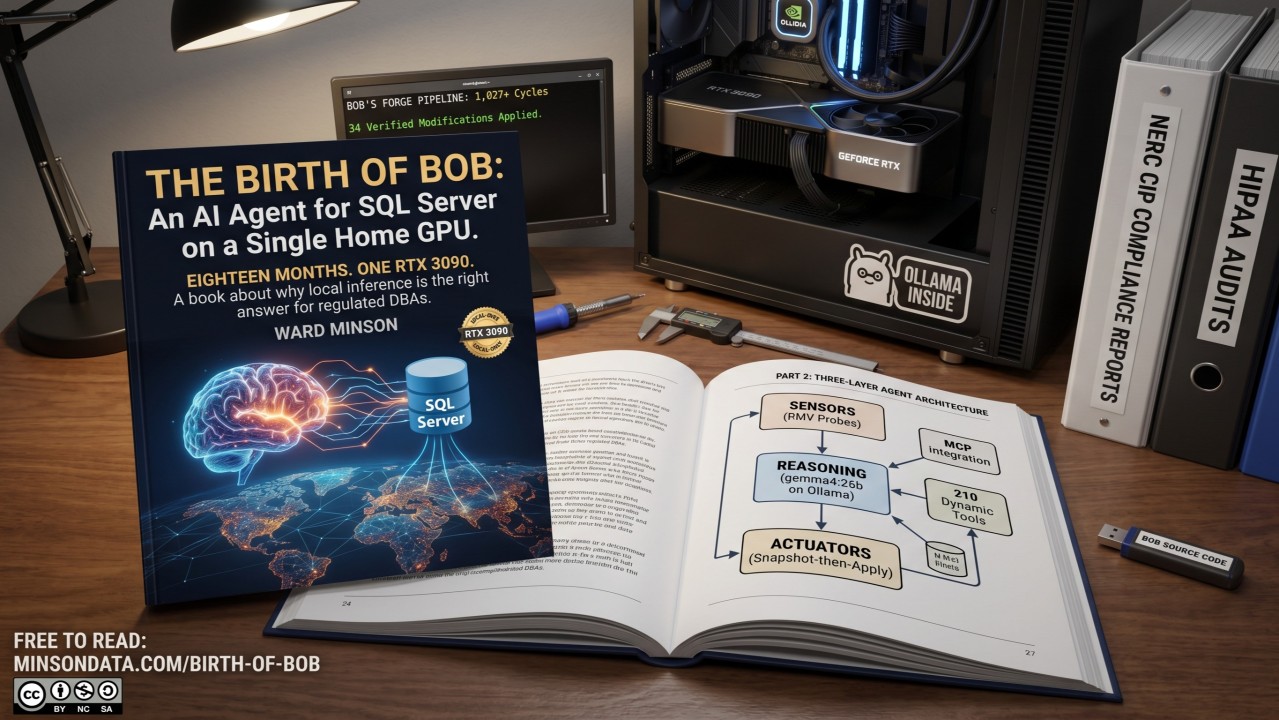

The Birth of Bob: An AI Agent for SQL Server That Runs on a Single Home GPU. The book is up.

Eighteen months. One RTX 3090. A book about why local inference is the right answer for regulated DBAs.

I spent eighteen months building Bob: an autonomous agent for SQL Server self-healing and network monitoring that runs entirely on local hardware. Today, the book about how I built it goes live.

The short version of why I built it: cloud LLMs are wrong for the work.

Most of my career has been SQL Server DBA work. The last several years specifically in regulated environments. NERC CIP at Entergy through ComTec. HIPAA at Lumeris. GDPR and PCI DSS in the deduplication pipelines I run today. In every one of those environments, the data and the schema are the kind of information that cannot leave the network. A query plan that references a table called TransmissionLineMonitor is not something you send to OpenAI. The compliance review alone takes a quarter.

But the DBAs in those same environments are the ones drowning in 200-page health reports. The reports are accurate and useless: they record everything and explain nothing. The interpretation gap between the data and the decision is closeable, but the conventional answer (a senior DBA reads the report manually) does not scale, costs money, and leaves rare interpretive expertise concentrated in a few people.

So I built Bob. The book is the story of how, told in three parts.

The Story in Three Parts

- Part 1: The Origin Story. The 214-page report problem. The shift from clicking through SSMS to vibe coding. Why "AI for DBAs" is a real movement and not a buzzword.

- Part 2: Technical Implementation. Local Ollama on a Ryzen 9 + RTX 3090. A three-layer agent: sensors that probe DMVs, a reasoning layer running gemma4:26b, and actuators with a snapshot-then-apply discipline that has caught more than one bad fix before it landed. MCP as the universal capability bridge: 24 inline tools plus 210 dynamic tools from 70 feature modules, with mcp_call as the gateway to 43 MCP servers.

- Part 3: Future Roadmap. Toward genuine self-healing (Bob's Forge pipeline already self-modifies: 1,027+ cycles, 34 verified modifications applied to the reasoning function while the agent was running against it). Scaling sovereignty for digital agencies. What the AI-for-DBAs community is for, and how to join.
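The snapshot-then-apply discipline mentioned in Part 2 can be sketched in a few lines. What follows is a minimal illustration, not Bob's actual actuator code: the `Fix` type, the `snapshot_then_apply` function, and the in-memory settings dictionary standing in for server configuration are all hypothetical. The idea it shows is that state is captured before a change and restored if the change raises an error or fails its own verification check.

```python
from dataclasses import dataclass
from typing import Callable, Dict

# Hypothetical stand-in for server configuration; a real actuator would
# talk to SQL Server instead of mutating a dictionary.
Settings = Dict[str, int]


@dataclass
class Fix:
    description: str
    apply: Callable[[Settings], None]   # mutates the settings in place
    verify: Callable[[Settings], bool]  # True if the result looks healthy


def snapshot_then_apply(settings: Settings, fix: Fix) -> bool:
    """Apply a fix; restore the snapshot if it raises or fails verification."""
    snapshot = dict(settings)  # capture state before touching anything
    try:
        fix.apply(settings)
        ok = fix.verify(settings)
    except Exception:
        ok = False
    if not ok:
        # Roll back to the state captured before the fix ran.
        settings.clear()
        settings.update(snapshot)
    return ok


# Two illustrative fixes: one that passes its check and one that does not.
good_fix = Fix(
    "raise cost threshold for parallelism",
    lambda s: s.update(cost_threshold_for_parallelism=50),
    lambda s: s["cost_threshold_for_parallelism"] >= 25,
)
bad_fix = Fix(
    "set a negative degree of parallelism",
    lambda s: s.update(max_degree_of_parallelism=-1),
    lambda s: s["max_degree_of_parallelism"] >= 0,
)
```

In a real actuator the snapshot would more plausibly be a saved copy of the configuration or a database snapshot, but the control flow would be the same: nothing lands permanently until the verification step agrees.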

What's Inside

The whole thing runs on a single RTX 3090 in a home lab. No external API calls during inference. Query plans never leave the LAN.
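One way to make "no external API calls during inference" enforceable is to refuse any inference endpoint that is not on the local machine or a private network. The sketch below is an assumption about how such a guard could look, not a description of Bob's code: `is_lan_endpoint` and `build_generate_request` are hypothetical helpers. The `/api/generate` path and port 11434 are Ollama's documented defaults, and the model tag is the one named in Part 2.

```python
import ipaddress
import json
from typing import Tuple
from urllib.parse import urlparse


def is_lan_endpoint(url: str) -> bool:
    """Return True only for localhost, loopback, or private-range IP hosts."""
    host = urlparse(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Any other DNS name is rejected rather than resolved (a deliberately
        # strict assumption for this sketch).
        return False
    return addr.is_loopback or addr.is_private


def build_generate_request(base_url: str, model: str, prompt: str) -> Tuple[str, bytes]:
    """Build the URL and JSON body for a local Ollama generate call."""
    if not is_lan_endpoint(base_url):
        raise ValueError(f"refusing non-local inference endpoint: {base_url}")
    body = json.dumps({"model": model, "prompt": prompt, "stream": False})
    return base_url.rstrip("/") + "/api/generate", body.encode("utf-8")


url, body = build_generate_request(
    "http://localhost:11434", "gemma4:26b", "Summarize the top wait types."
)
```

Passing the returned URL and body to `urllib.request.urlopen` would perform the call. The guard runs before anything is sent, so a misconfigured cloud URL fails loudly instead of leaking a prompt.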

- The PDF is 69 pages.

- The EPUB is 91 KB.

- The full site (with rendered Mermaid diagrams of every architectural piece) is free to read: minsondata.com/birth-of-bob

(CC BY-NC-SA 4.0 on the manuscript. MIT on the code samples.)

Where We Go From Here

- Join the conversation. This newsletter is one half of the AI for DBAs community. The other half is a working group on Skool. Prompt templates, war stories, and the patterns that did not fit in the book live in both.

- Send your war stories. A wait-stats pattern I missed. A configuration that breaks Bob in a way I did not anticipate. An extension you want to build. Failures are as useful as wins, and the next version of this book will include patterns from readers who actually run this in production.

- If you are an investor. I am actively looking for backing to take Bob from a home lab project to a productized agent for managed-services providers and regulated-industry data teams. The architecture is proven. The stack is open. The market is every DBA who cannot send their query plans to a cloud API.

Twenty years of DBA work taught me that systems do not maintain themselves. Someone has to care enough to be awake at 3 AM with a flashlight and a checklist. Bob is that someone, for one specific class of problems.

Originally published on the AI for DBAs newsletter on LinkedIn.